For years now we've been asking for a feature that gets a raw stream of audio out of Asterisk in a usable form, so we can integrate with speech to text engines, bot platforms and so on. That became a possibility in version 16.6 of Asterisk (https://blogs.asterisk.org/2019/10/09/external-media-a-new-way-to-get-media-in-and-out-of-asterisk/).

Dana was built to show developers how to go about building for Asterisk's SFU, but I also wanted to show off some other new abilities in Asterisk - namely External Media and ARI applications without Dialplan. So that's what I've done. There are now two other projects on GitHub: a speech to text service which takes audio from Asterisk and sends it to Google's Speech to Text engine, and a very simple ARI application which creates SFU conference bridges and spies on individual channels so that each participant's audio is transcribed separately.
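To make the External Media part a little more concrete, here's a minimal sketch of the request an ARI app sends to ask Asterisk for an external media channel. The parameter names (`app`, `external_host`, `format`) come from the ARI `externalMedia` resource; the function name, interface and example values are made up for illustration and aren't taken from the repos.

```typescript
// Hypothetical helper: builds the path for a POST to ARI's externalMedia
// resource. Only the three required parameters are included here.
interface ExternalMediaRequest {
  app: string;          // ARI application that will own the new channel
  externalHost: string; // host:port of the service that receives the RTP
  format: string;       // Asterisk format name, e.g. "ulaw" or "slin16"
}

function externalMediaPath(req: ExternalMediaRequest): string {
  const params: Array<[string, string]> = [
    ["app", req.app],
    ["external_host", req.externalHost],
    ["format", req.format],
  ];
  // Percent-encode each value so "host:port" survives the query string.
  const query = params
    .map(([key, value]) => `${key}=${encodeURIComponent(value)}`)
    .join("&");
  return `/ari/channels/externalMedia?${query}`;
}
```

You'd POST that path to Asterisk's HTTP interface with your ARI credentials. As far as I understand it, the channel that comes back carries channel variables such as `UNICASTRTP_LOCAL_PORT`, which tell you which local port Asterisk is sending the media from.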

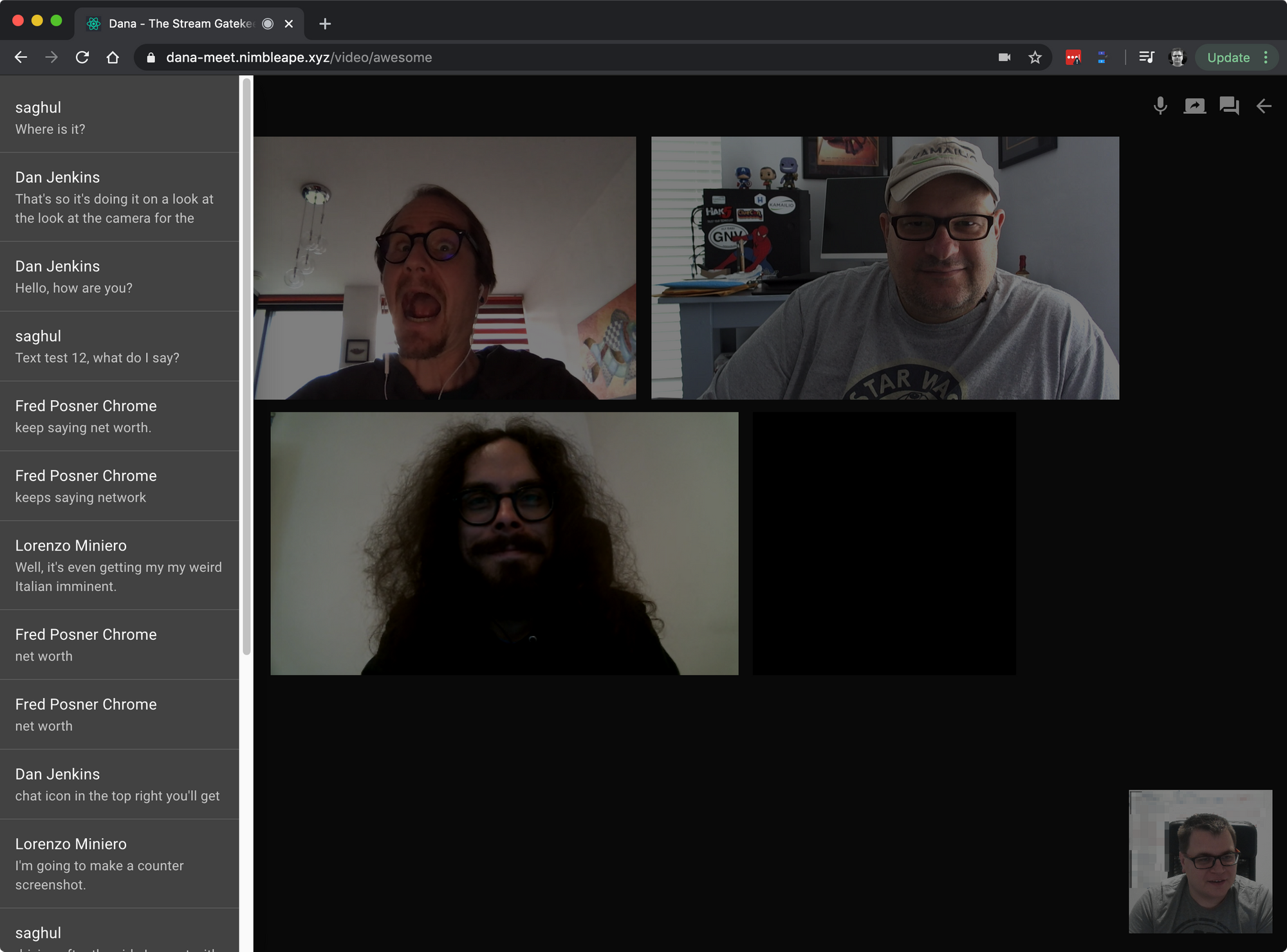

By using MQTT to push transcriptions from the server down to the browser, we're able to get real time, word for word transcriptions in Dana - it's pretty damn cool.

For a limited time, check out this version of Dana at https://dana-meet.nimbleape.xyz/ - you'll need to go into the settings and set your name. I'll keep it running for a couple of weeks, but of course there are costs involved in using the transcription service from Google, as well as the Vultr instance that's currently running Asterisk and the two Node services. (If you want to help keep this demo running, consider using my referral link for Vultr.)

Want to go run it yourself? Check out the GitHub repos - https://github.com/nimbleape/dana-tsg-rtp-stt-audioserver and https://github.com/nimbleape/dana-tsg-ari-bridge and you'll need a special branch of Dana for the time being - https://github.com/nimbleape/dana-the-stream-gatekeeper/tree/transcription-wip

All 3 of the services use MQTT to link them together (purely because MQTT has a nice WebSocket interface for the browser). Input your name into the settings and use any room name at all. We're using Asterisk's new ability to run with no Dialplan listed in extensions.conf: instead, against our WebRTC user, we've set a context that's automatically created for ARI apps. There's no dialplan extension pattern matching involved here, so the call goes straight into our conference bridge ARI app. Look at https://blogs.asterisk.org/2019/03/27/stasis-improvements-goodbye-dialplan/ if you want more detail on this.

The Audio Server is written in Node. It takes data from a UDP socket, forms it into a Node.js stream and pipes it into a Google Speech to Text stream. Sounds simple, doesn't it? It was far from simple, due to the nature of UDP, Asterisk servers outside of my network, needing to forward UDP packets over a UDP/TCP tunnel and so on, let alone everything that George Joseph had already figured out in his implementation - https://github.com/asterisk/asterisk-external-media. The key difference between George's and mine is the ability to handle multiple streams. That's done by getting the source port of the media out of Asterisk and sending it to the audio server, so we can say Participant X had a source port of 12345 and associate their media with Bridge Y and Participant X. I can't wait until the TCP transport (or better yet, WebSockets) is added to the External Media API - that should make developing solutions far easier (if you're OK with sending audio over TCP).
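The source port trick can be sketched roughly like this. It's a simplified illustration, not the code from the audio server repo: `PortRouter`, `StreamInfo` and `rtpPayload` are names I've made up, and it assumes plain RTP over UDP with no padding to worry about. The idea is to register each participant against the port Asterisk reported, then strip the RTP header from every incoming packet and hand the audio to whatever is feeding that participant's transcription stream.

```typescript
// Hypothetical types and names, for illustration only.
interface StreamInfo {
  bridgeId: string;
  participant: string;
}

type PayloadHandler = (info: StreamInfo, payload: Uint8Array) => void;

// Strip the RTP header (RFC 3550): 12 fixed bytes, 4 bytes per CSRC entry,
// plus an optional header extension. Padding is ignored in this sketch.
function rtpPayload(packet: Uint8Array): Uint8Array {
  if (packet.length < 12) {
    throw new Error("packet too short to be RTP");
  }
  const view = new DataView(packet.buffer, packet.byteOffset, packet.byteLength);
  const firstByte = view.getUint8(0);
  const csrcCount = firstByte & 0x0f;
  const hasExtension = (firstByte & 0x10) !== 0;
  let offset = 12 + 4 * csrcCount;
  if (hasExtension) {
    if (packet.length < offset + 4) {
      throw new Error("truncated RTP header extension");
    }
    // Big-endian count of 32-bit words following the 4-byte extension header.
    const words = view.getUint16(offset + 2);
    offset += 4 + 4 * words;
  }
  if (offset > packet.length) {
    throw new Error("RTP header longer than packet");
  }
  return packet.subarray(offset);
}

// Maps the source port Asterisk sends from to the participant it belongs to.
class PortRouter {
  private readonly streams = new Map<number, StreamInfo>();
  private readonly onPayload: PayloadHandler;

  constructor(onPayload: PayloadHandler) {
    this.onPayload = onPayload;
  }

  register(sourcePort: number, info: StreamInfo): void {
    this.streams.set(sourcePort, info);
  }

  unregister(sourcePort: number): void {
    this.streams.delete(sourcePort);
  }

  // Returns false when the packet came from a port nobody registered.
  route(sourcePort: number, packet: Uint8Array): boolean {
    const info = this.streams.get(sourcePort);
    if (info === undefined) {
      return false;
    }
    this.onPayload(info, rtpPayload(packet));
    return true;
  }
}
```

In a Node service you'd call `route(rinfo.port, msg)` from the `message` event of a `dgram` socket and write the payload into that participant's speech to text stream. Bear in mind this only works if the port the audio server sees is the same one Asterisk reported, which is exactly where NAT and tunnels make life difficult.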

It's all been tested on Chrome only and there are still a few issues with video tracks - feel free to create issues on those GitHub repos on anything odd that you spot.