Live transcription of media is becoming easier and easier to implement thanks to Google, Microsoft, IBM and the like, so we're seeing live transcriptions appear as captions on live-streamed video on the web at little cost to the developer. Whether that's a conferencing system or YouTube, video on the Modern Web is delivered using a <video> element, thanks to HTML5 video, which doesn't require plugins such as Flash.

It's a huge benefit for everyone who views these streams. I'm not hard of hearing, and I don't have a disability that makes it impossible for me to hear the world, but I do find subtitles a fantastic addition to any video. I have a son who likes to make a lot of noise, I have a son who's in bed at a particular time (so audio output levels drop), and I also find it difficult some of the time to hear what's being said by others generally. Android 10 on Pixel devices recently got the ability to have closed captions automatically appear on top of any video playing on screen. Being able to automatically translate someone speaking a foreign language and display subtitles in your own language is hugely beneficial too. People use closed captions and subtitles for many reasons; you don't have to be deaf to need them.

Having recently created the Dana demo project for Asterisk's SFU, and with Asterisk's recent External Media APIs making it easier than ever to get real-time audio out of Asterisk, I wanted to add live, real-time captioning for each individual participant in a conference to Dana. Dana is a demo app that showcases using Web Technologies in the right way, which means using WebVTT captions on a <video> element. Dana is a WebRTC application, so I'm purely looking at WebRTC media, not HTTP streaming.
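To make that goal concrete, here is a minimal TypeScript sketch of what attaching captions to a <video> element through the browser's text track API (`addTextTrack` and `VTTCue`) could look like. The `pushCaption` helper, its four-second display time and the track label are illustrative assumptions of mine, not code from Dana, and the sketch says nothing about how well cues behave on a live WebRTC stream.

```typescript
// Hypothetical helper: the structural types below let the sketch compile
// outside a browser. In a page, TextTrack and VTTCue satisfy them.
interface CueSink<C> {
  addCue(cue: C): void;
}

type CueFactory<C> = (startTime: number, endTime: number, text: string) => C;

// Add one caption that starts at `now` (seconds on the media timeline)
// and stays on screen for `holdSeconds` (an assumed default of 4).
function pushCaption<C>(
  track: CueSink<C>,
  makeCue: CueFactory<C>,
  text: string,
  now: number,
  holdSeconds: number = 4
): C {
  const cue = makeCue(now, now + holdSeconds, text);
  track.addCue(cue);
  return cue;
}

// Browser usage (assumes `video` is the participant's <video> element):
//   const track = video.addTextTrack("captions", "Live captions", "en");
//   track.mode = "showing";
//   pushCaption(track, (s, e, t) => new VTTCue(s, e, t), "Hello", video.currentTime);
```

Passing the cue constructor in as a factory keeps the helper independent of the DOM, which makes it easy to exercise with a fake track before wiring it to a real transcription feed.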

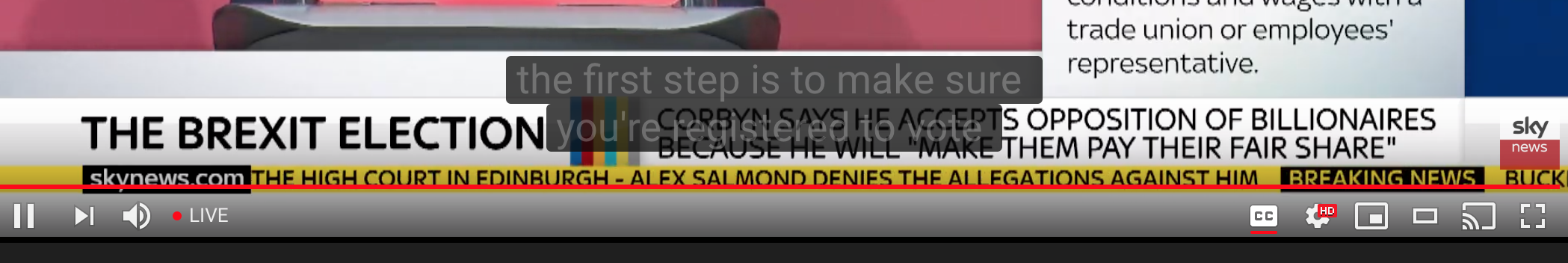

This is where I found a 3-year-old issue on the W3C GitHub repo talking about the very thing I wanted; unfortunately it was still open and unresolved. So how does YouTube, for example, do its live captioning like in the image above? Quite simply, it's a <div> element placed above a <video> element with its content changed dynamically. It works really quite well, and you get full control over everything you'd ever want, but it doesn't use the standards that empower the Web.
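The overlay approach boils down to something like the TypeScript sketch below. This is not YouTube's code: `CaptionOverlay`, `maxLines` and the rolling two-line window are assumptions of mine, used only to show the shape of the technique.

```typescript
// Hypothetical overlay sketch. Any object with a writable `textContent`
// works, so a real <div> positioned over the <video> fits this type.
interface TextHost {
  textContent: string | null;
}

class CaptionOverlay {
  private host: TextHost;
  private maxLines: number;
  private lines: string[] = [];

  constructor(host: TextHost, maxLines: number = 2) {
    this.host = host;
    this.maxLines = maxLines;
  }

  // Append a finished line of transcript and keep only the newest lines.
  push(line: string): void {
    this.lines.push(line);
    if (this.lines.length > this.maxLines) {
      this.lines = this.lines.slice(-this.maxLines);
    }
    this.host.textContent = this.lines.join("\n");
  }
}

// Browser usage (assumes a <div id="captions"> styled with
// `position: absolute` and `white-space: pre-line` over the video):
//   const overlay = new CaptionOverlay(document.getElementById("captions")!);
//   overlay.push("Hello and welcome");
```

Everything here is plain DOM text, which is why the approach is so flexible, and also why the browser has no idea the text is a caption.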

In recent years we've seen huge strides forward with the Modern Web. Project Fugu is bringing the web ever closer to the capabilities of native applications. We should absolutely be looking at enabling web applications to work with web standards for any user who chooses to use subtitles or closed captions, and for those who don't get a choice.

Header image from https://www.flickr.com/photos/45909111@N00/8686204832